kubesprayto282

Jan 31, 2019

Technology

AIM

Since kubespray has released v2.8.2, I write this article for recording steps of upgrding from v2.8.1 to v2.8.2 of my offline solution.

Prerequisites

Download the v2.8.2 release from:

https://github.com/kubernetes-sigs/kubespray/releases

# wget https://github.com/kubernetes-sigs/kubespray/archive/v2.8.2.tar.gz

# tar xzvf v2.8.2.tar.gz

# cd kubespray-2.8.2

Modification

1. cluster.yml

three changes made.

a. added kube-deploy role.

b. disable instal container-engine(cause we will directly install docker).

c. create role for k8s dashboard.

- { role: bastion-ssh-config, tags: ["localhost", "bastion"]}

# Notice 1, we combine deploy.yml here.

- hosts: kube-deploy

gather_facts: false

roles:

- kube-deploy

# Notice 1 ends here.

- hosts: k8s-cluster:etcd:calico-rr:!kube-deploy

any_errors_fatal: "{{ any_errors_fatal | default(true) }}"

.......

- { role: kubernetes/preinstall, tags: preinstall }

#- { role: "container-engine", tags: "container-engine", when: deploy_container_engine|default(true) }

- { role: download, tags: download, when: "not skip_downloads" }

.......

- { role: kubernetes-apps, tags: apps }

- hosts: kube-master[0]

tasks:

- name: "Create clusterrolebinding"

shell: kubectl create clusterrolebinding cluster-admin-fordashboard --clusterrole=cluster-admin --user=system:serviceaccount:kube-system:kubernetes-dashboard

2. Vagrantfile

10 Changes made.

a. Added customized vagrant box(vagrant-libvirt).

b. Added kube-deploy instance, which will automatically added into vagrantfile.

c. Added disks, we will use glusterfs.

d. We specify the system, and enable calico instead of flannel.

e. Added dns.sh for customized the offline environment dns.

f. Added vagrant-libvirt items.

g. Disable sync folder.

h. Call dns.sh when every vm startup.

i. Disable some download items, and specify the ansible python interpreter.

j. Let ansible become root, and disable ask-pass prompt, also add kube-deploy into vagrant generated inventory file.

"opensuse-tumbleweed" => {box: "opensuse/openSUSE-Tumbleweed-x86_64", user: "vagrant"},

"wukong" => {box: "kubespray", user: "vagrant"},

"wukong1804" => {box: "rong1804", user: "vagrant"},

}

......

$kube_master_instances = $num_instances == 1 ? $num_instances : ($num_instances - 1)

$kube_deploy_instances = 1

# All nodes are kube nodes

......

$kube_node_instances_with_disks = true

$kube_node_instances_with_disks_size = "40G"

......

$os = "wukong"

$network_plugin = "calico"

......

FileUtils.ln_s($inventory, File.join($vagrant_ansible,"inventory"))

end

end

File.open('./dns.sh' ,'w') do |f|

f.write "#!/bin/bash\n"

f.write "sed -i '/^#VAGRANT-END/i dns-nameservers 10.148.129.101' /etc/network/interfaces\n"

f.write "systemctl restart networking.service\n"

#f.write "rm -f /etc/resolv.conf\n"

#f.write "ln -s /run/systemd/resolve/resolv.conf /etc/resolv.conf\n"

#f.write "echo ' nameservers:'>>/etc/netplan/50-vagrant.yaml\n"

#f.write "echo ' addresses: [10.148.129.101]'>>/etc/netplan/50-vagrant.yaml\n"

#f.write "netplan apply eth1\n"

end

......

lv.default_prefix = 'rong1804node'

lv.cpu_mode = 'host-passthrough'

lv.storage_pool_name = 'default'

lv.disk_bus = 'virtio'

lv.nic_model_type = 'virtio'

lv.volume_cache = 'writeback'

# Fix kernel panic on fedora 28

......

node.vm.synced_folder ".", "/vagrant", disabled: true, type: "rsync", rsync__args: ['--verbose', '--archive', '--delete', '-z'] , rsync__exclude: ['.git','venv']

$shared_folders.each do |src, dst|

......

node.vm.provision "shell", inline: "swapoff -a"

# Change the dns-nameservers

node.vm.provision :shell, path: "dns.sh"

......

#"docker_keepcache": "1",

#"download_run_once": "True",

#"download_localhost": "False",

"ansible_python_interpreter": "/usr/bin/python3"

......

ansible.become_user = "root"

ansible.limit = "all"

ansible.host_key_checking = false

#ansible.raw_arguments = ["--forks=#{$num_instances}", "--flush-cache", "--ask-become-pass"]

ansible.host_vars = host_vars

#ansible.tags = ['download']

ansible.groups = {

"kube-deploy" => ["#{$instance_name_prefix}-[1:#{$kube_deploy_instances}]"],

3. inventory folder

Remove the hosts.ini file under inventory/sample/.

Edit some content in sample folder:

inventory/sample/group_vars/k8s-cluster/k8s-cluster.yml:

kubelet_deployment_type: host

helm_deployment_type: host

helm_stable_repo_url: "http://portus.ddddd.com:5000/chartrepo/kubesprayns"

helm/metric server :

helm_enabled: true

# Metrics Server deployment

metrics_server_enabled: true

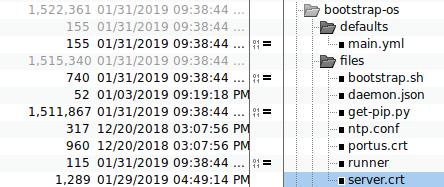

4. role bootstrap-os

- Copy

daemon.json,ntp.conf,portus.crt,server.crtto kubespray-2.8.2.

- bootstrap-ubuntu.yml changes

For disable auto-update, and configure intranet repository.

# Bug-fix1, disable auto-update for releasing apt controlling

- name: "Configure apt service status"

raw: systemctl stop apt-daily.timer;systemctl disable apt-daily.timer ; systemctl stop apt-daily-upgrade.timer ; systemctl disable apt-daily-upgrade.timer; systemctl stop apt-daily.service; systemctl mask apt-daily.service; systemctl daemon-reload

- name: "Sed configuration"

raw: sed -i 's/APT::Periodic::Update-Package-Lists "1"/APT::Periodic::Update-Package-Lists "0"/' /etc/apt/apt.conf.d/10periodic

- name: Configure intranet repository

raw: echo "deb [trusted=yes] http://portus.dddd.com:8888 ./">/etc/apt/sources.list && apt-get update -y && mkdir -p /usr/local/static

- name: List ubuntu_packages

Install more packages:

- python-pip

- ntp

- dbus

- docker-ce

- build-essential

Upload the crt files, add configuration files for docker, docker login ,etc.

- name: "upload portus.crt and server.crt files to kube-deploy"

copy:

src: "{{ item }}"

dest: /usr/local/share/ca-certificates

owner: root

group: root

mode: 0777

with_items:

- files/portus.crt

- files/server.crt

- name: "upload daemon.json files to kube-deploy"

copy:

src: files/daemon.json

dest: /etc/docker/daemon.json

owner: root

group: root

mode: 0777

- name: "update ca-certificates"

shell: update-ca-certificates

- name: ensure docker service is restarted and enabled

service:

name: "{{ item }}"

enabled: yes

state: restarted

with_items:

- docker

- name: Docker login portus.dddd.com

shell: docker login -u kubespray -p Thinker@1 portus.dddd.com:5000

- name: Docker login docker.io

shell: docker login -u kubespray -p thinker docker.io

- name: Docker login quay.io

shell: docker login -u kubespray -p thinker quay.io

- name: Docker login gcr.io

shell: docker login -u kubespray -p thinker gcr.io

- name: Docker login k8s.gcr.io

shell: docker login -u kubespray -p thinker k8s.gcr.io

- name: Docker login docker.elastic.co

shell: docker login -u kubespray -p thinker docker.elastic.co

- name: "upload ntp.conf"

copy:

src: files/ntp.conf

dest: /etc/ntp.conf

owner: root

group: root

mode: 0777

- name: "Configure ntp service"

shell: systemctl enable ntp && systemctl start ntp

- set_fact:

ansible_python_interpreter: "/usr/bin/python"

tags:

- facts

5. role download

Using local server for downloading:

# Download URLs

#kubeadm_download_url: "https://storage.googleapis.com/kubernetes-release/release/{{ kubeadm_version }}/bin/linux/{{ image_arch }}/kubeadm"

#hyperkube_download_url: "https://storage.googleapis.com/kubernetes-release/release/{{ kube_version }}/bin/linux/amd64/hyperkube"

etcd_download_url: "https://github.com/coreos/etcd/releases/download/{{ etcd_version }}/etcd-{{ etcd_version }}-linux-amd64.tar.gz"

#cni_download_url: "https://github.com/containernetworking/plugins/releases/download/{{ cni_version }}/cni-plugins-{{ image_arch }}-{{ cni_version }}.tgz"

kubeadm_download_url: "http://portus.dddd.com:8888/kubeadm"

hyperkube_download_url: "http://portus.dddd.com:8888/hyperkube"

cni_download_url: "http://portus.dddd.com:8888/cni-plugins-{{ image_arch }}-{{ cni_version }}.tgz"

Offline role

Create an offline folder under role:

# mkdir -p roles/kube-deploy

Under this folder we got following files:

# tree .

.

├── files

│ ├── 1604debs.tar.xz

│ ├── 1804debs.tar.xz

│ ├── autoindex.tar.xz

│ ├── ban_nouveau.run

│ ├── busybox1262.tar.xz

│ ├── cni-plugins-amd64-v0.6.0.tgz

│ ├── cni-plugins-amd64-v0.7.0.tgz

│ ├── compose.tar.gz

│ ├── daemon.json

│ ├── dashboard.tar.xz

│ ├── data.tar.gz

│ ├── db.docker.io

│ ├── db.elastic.co

│ ├── db.gcr.io

│ ├── db.quay.io

│ ├── db.dddd.com

│ ├── deploy.key

│ ├── deploy.key.pub

│ ├── deploy_local.repo

│ ├── dns.sh

│ ├── docker-compose

│ ├── docker-infra.service

│ ├── fileserver.tar.gz

│ ├── harbor.tar.xz

│ ├── hyperkube

│ ├── install.run

│ ├── kubeadm

│ ├── kubeadm.official

│ ├── mynginx.service

│ ├── named.conf.default-zones

│ ├── nginx19.tar.xz

│ ├── nginx.tar

│ ├── nginx.yaml

│ ├── ntp.conf

│ ├── pipcache.tar.gz

│ ├── portus.crt

│ ├── portus.tar.xz

│ ├── registry2.tar.xz

│ ├── secureregistryserver.service

│ ├── secureregistryserver.tar

│ ├── server.crt

│ └── tag_and_push.sh

└── tasks

├── deploy-centos.yml

├── deploy-ubuntu.yml

└── main.yml

2 directories, 45 files

Now using old file, for setting up a cluster, now login to kube-deploy server. do following steps:

# systemctl stop secureregistryserver

# docker ps | grep secureregistryserver

# rm -rf /usr/local/secureregistryserver/data/

# mkdir /usr/local/secureregistryserver/data

# systemctl start secureregistryserver

# docker ps | grep secureregistry

7ce648336f22 nginx:1.9 "nginx -g 'daemon of…" 3 seconds ago Up 1 second 80/tcp, 0.0.0.0:443->443/tcp secureregistryserver_nginx_1_ce6348edc346

9258c0396001 registry:2 "/entrypoint.sh /etc…" 4 seconds ago Up 2 seconds 127.0.0.1:5050->5000/tcp secureregistryserver_registry_1_76f71f58f22d

Load the kubespray images and push to secureregistryserver:

# docker load<combine.tar.xz

# docker push *****************

# docker push *****************

# docker push *****************

Load the kubeadm related images and push to secureregistryserver:

# docker load<kubeadm.tar

# docker push *****************

# docker push *****************

# docker push *****************

Now stop the secureregistryserver service and make the tar file:

# systemctl stop secureregistryserver

# cd /usr/local/

# tar cvf secureregistryserver.tar secureregistryserver

The secureregistryserver.tar should be replaced into the kube-deploy/files folder

Replace the kubeadm( we add 100 years ssl signature):

# sha256sum kubeadm

33ef160d8dd22e7ef6eb4527bea861218289636e6f1e3e54f2b19351c1048a07 kubeadm

# vim /var1/sync/kubespray282/kubespray282/kubespray-2.8.2/roles/download/defaults/main.yml

kubeadm_checksums:

v1.12.5: 33ef160d8dd22e7ef6eb4527bea861218289636e6f1e3e54f2b19351c1048a07

Replaces the hyperkube:

# wget https://storage.googleapis.com/kubernetes-release/release/v1.12.5/bin/linux/amd64/hyperkube

Replace New Items

!!!Rechecked here!!!

debs.tar.xz should be replaced(Currently we use the old ones)

secureregistryserver.tar contains all of the offline docker images, must be replaced.

kubeadm should be replaced, and checksums should be updated.

hyperkube should be replaced.

Using vps for fetching images

Under a vps, download the kubespray-2.8.2.tar.gz, and made some modifications for syncing back the images:

Create a new sync.yml file:

root@vpsserver:~/Code/kubespray-2.8.2# cat sync.yml

---

- hosts: k8s-cluster:etcd:calico-rr

any_errors_fatal: "{{ any_errors_fatal | default(true) }}"

roles:

- { role: kubespray-defaults}

- { role: sync, tags: download, when: "not skip_downloads" }

environment: "{{proxy_env}}"

Copy the sample folder under inventory to a new name sync:

root@vpsserver:~/Code/kubespray-2.8.2# cat inventory/sync/hosts.ini

# ## Configure 'ip' variable to bind kubernetes services on a

# ## different ip than the default iface

# ## We should set etcd_member_name for etcd cluster. The node that is not a etcd member do not need to set the value, or can set the empty string value.

[all]

vpsserver ansible_ssh_host=1xxx.xxx.xxx.xx ansible_user=root ansible_ssh_private_key_file="/root/.ssh/id_rsa" ansible_port=22

[kube-master]

vpsserver

[etcd]

vpsserver

[kube-node]

vpsserver

[k8s-cluster:children]

kube-master

kube-node

Copy roles/download to roles/sync, and make some changes, notice all of the downloads docker images should changes to enabled:true:

root@vpsserver:~/Code/kubespray-2.8.2/roles# ls

adduser bootstrap-os dnsmasq etcd kubernetes-apps network_plugin reset upgrade

bastion-ssh-config container-engine download kubernetes kubespray-defaults remove-node sync win_nodes

root@vpsserver:~/Code/kubespray-2.8.2/roles# cd sync/

root@vpsserver:~/Code/kubespray-2.8.2/roles/sync# ls

defaults meta tasks

root@vpsserver:~/Code/kubespray-2.8.2/roles/sync# cat defaults/main.yml

downloads:

netcheck_server:

#enabled: "{{ deploy_netchecker }}"

enabled: true

container: true

repo: "{{ netcheck_server_image_repo }}"

tag: "{{ netcheck_server_image_tag }}"

sha256: "{{ netcheck_server_digest_checksum|default(None) }}"

groups:

- k8s-cluster

netcheck_agent:

#enabled: "{{ deploy_netchecker }}"

enabled: true

container: true

repo: "{{ netcheck_agent_image_repo }}"

tag: "{{ netcheck_agent_image_tag }}"

sha256: "{{ netcheck_agent_digest_checksum|default(None) }}"

groups:

- k8s-cluster

Disalbe Sync containers tasks, so we will only download the docker images to local.

root@vpsserver:~/Code/kubespray-2.8.2/roles/sync# cat tasks/main.yml

---

- include_tasks: download_prep.yml

when:

- not skip_downloads|default(false)

- name: "Download items"

include_tasks: "download_{% if download.container %}container{% else %}file{% endif %}.yml"

vars:

download: "{{ download_defaults | combine(item.value) }}"

with_dict: "{{ downloads }}"

when:

- not skip_downloads|default(false)

- item.value.enabled

- (not (item.value.container|default(False))) or (item.value.container and download_container)

#- name: "Sync container"

# include_tasks: sync_container.yml

# vars:

# download: "{{ download_defaults | combine(item.value) }}"

# with_dict: "{{ downloads }}"

# when:

# - not skip_downloads|default(false)

# - item.value.enabled

# - item.value.container | default(false)

# - download_run_once

# - group_names | intersect(download.groups) | length

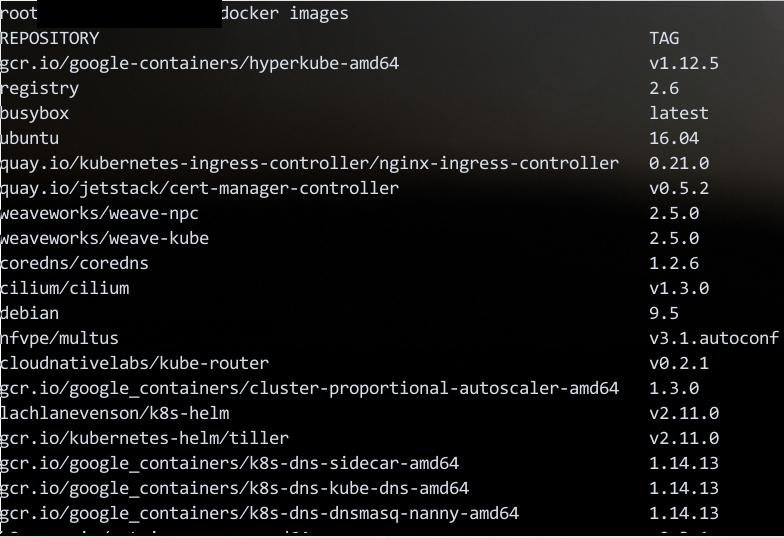

All of the kubespray pre-defined images will be downloaded to vps, check via:

Save it using scripts:

# docker save -o combine.tar gcr.io/google-containers/hyperkube-amd64:v1.12.5 registry:2.6 busybox:latest quay.io/kubernetes-ingress-controller/nginx-ingress-controller:0.21.0 quay.io/jetstack/cert-manager-controller:v0.5.2 weaveworks/weave-npc:2.5.0 weaveworks/weave-kube:2.5.0 coredns/coredns:1.2.6 cilium/cilium:v1.3.0 nfvpe/multus:v3.1.autoconf cloudnativelabs/kube-router:v0.2.1 gcr.io/google_containers/cluster-proportional-autoscaler-amd64:1.3.0 lachlanevenson/k8s-helm:v2.11.0 gcr.io/kubernetes-helm/tiller:v2.11.0 gcr.io/google_containers/k8s-dns-sidecar-amd64:1.14.13 gcr.io/google_containers/k8s-dns-kube-dns-amd64:1.14.13 gcr.io/google_containers/k8s-dns-dnsmasq-nanny-amd64:1.14.13 k8s.gcr.io/metrics-server-amd64:v0.3.1 quay.io/external_storage/cephfs-provisioner:v2.1.0-k8s1.11 gcr.io/google_containers/kubernetes-dashboard-amd64:v1.10.0 busybox:1.29.2 quay.io/coreos/etcd:v3.2.24 k8s.gcr.io/addon-resizer:1.8.3 quay.io/calico/node:v3.1.3 quay.io/calico/ctl:v3.1.3 quay.io/calico/kube-controllers:v3.1.3 quay.io/calico/cni:v3.1.3 nginx:1.13 quay.io/external_storage/local-volume-provisioner:v2.1.0 quay.io/calico/routereflector:v0.6.1 quay.io/coreos/flannel:v0.10.0 contiv/netplugin:1.2.1 contiv/auth_proxy:1.2.1 gcr.io/google_containers/pause-amd64:3.1 ferest/etcd-initer:latest andyshinn/dnsmasq:2.78 quay.io/coreos/flannel-cni:v0.3.0 xueshanf/install-socat:latest quay.io/l23network/k8s-netchecker-agent:v1.0 quay.io/l23network/k8s-netchecker-server:v1.0 gcr.io/google_containers/kube-registry-proxy:0.4

# xz combine.tar

Using vagrant for fetching kubeadm

Your vagrant box should cross gfw, then you use a 1604 box for create an all-in-one k8s cluster.

After provision, use following command for saving all of the images:

# docker images

# docker save -o combine.tar xxxxx xxx xxx xxx xxx xxx